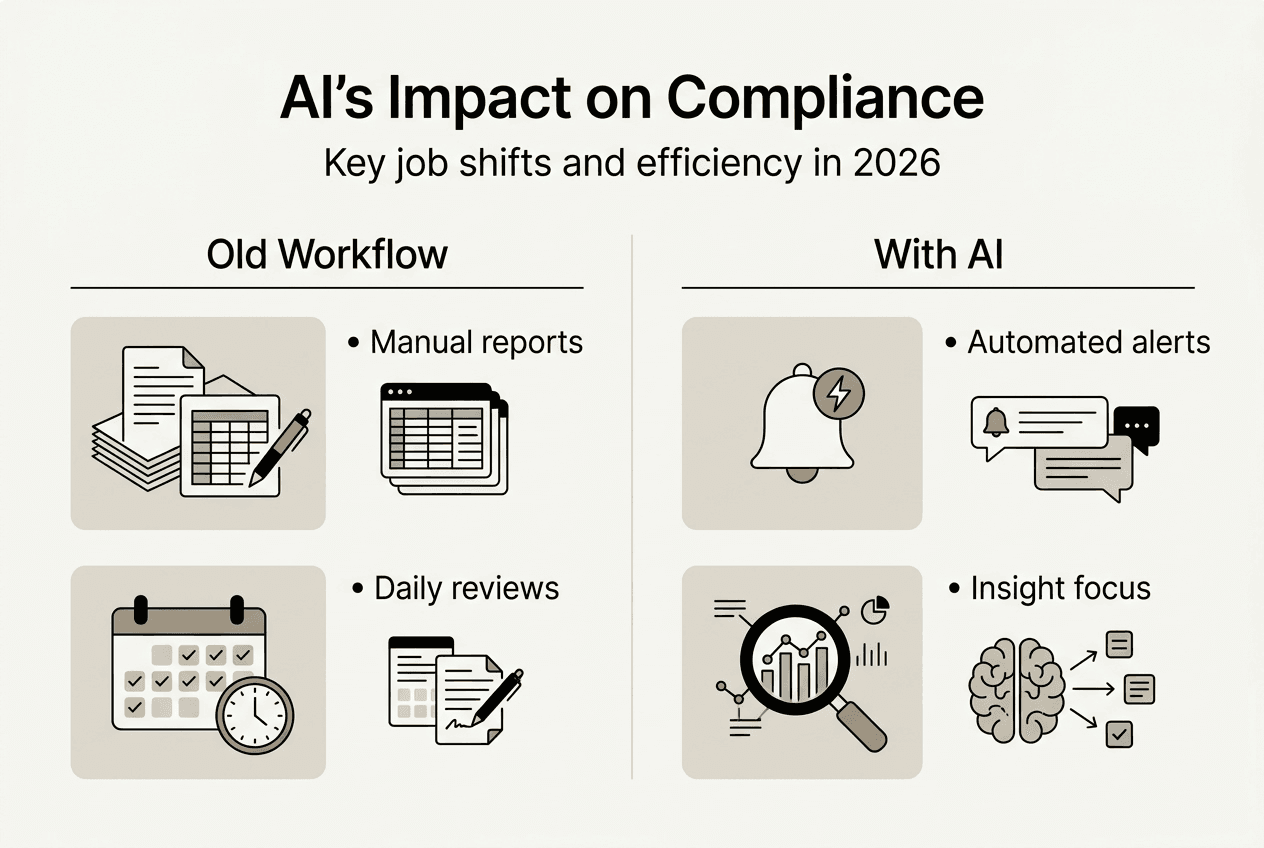

AI in compliance: Transform your 2026 strategy

- Леонид Ложкарев

- Mar 13

- 8 min read

AI adoption in compliance has exploded, with over 50% of compliance officers now actively using or testing AI tools in 2026. This rapid shift challenges traditional assumptions about compliance work and creates both opportunities and uncertainties. Many professionals wonder whether AI will replace human judgment or simply enhance it. The reality is more nuanced. AI is transforming compliance from a reactive, manual discipline into a proactive, data-driven function that empowers professionals to focus on strategic risk management. This article explores how AI reshapes compliance today, the tangible benefits it delivers, governance challenges you must navigate, and practical steps to integrate AI successfully into your compliance program.

Key takeaways

| Point | Details |
| --- | --- |
| Rapid AI adoption | Over 50% of compliance professionals now use AI tools, up from 30% in 2023. |
| Diverse applications | AI enhances policy development, training, monitoring, communications oversight, and transaction processing. |
| Significant efficiency gains | AI reduces false positives by over 90% and cuts transaction processing times by 40% in some cases. |
| Governance is critical | With 157 new AI laws introduced in 2025 alone, robust governance frameworks are essential. |
| Strategic transformation | AI shifts compliance from manual tasks to strategic, data-driven risk management. |

How AI is transforming compliance today

The compliance landscape has shifted dramatically. More than 56% of compliance experts reported using AI in 2024, up from 41% the year before. By 2026, over 50% of compliance officers actively deploy AI solutions across their organizations. This acceleration reflects AI’s proven value in automating high-frequency, standardized tasks that once consumed countless hours of manual effort.

AI now handles diverse compliance functions:

- Improving and updating compliance policies based on regulatory changes
- Enhancing training programs through personalized learning paths
- Monitoring third-party vendor compliance and risk exposure
- Overseeing communications for insider trading, market manipulation, and conduct violations
- Automating data collection, report generation, and anomaly detection

These applications free compliance professionals from repetitive work, allowing them to concentrate on interpreting insights and managing complex risks. AI excels at processing massive datasets quickly, identifying patterns humans might miss, and flagging potential issues before they escalate. This capability is particularly valuable in financial services, where transaction volumes and regulatory complexity create overwhelming manual workloads.

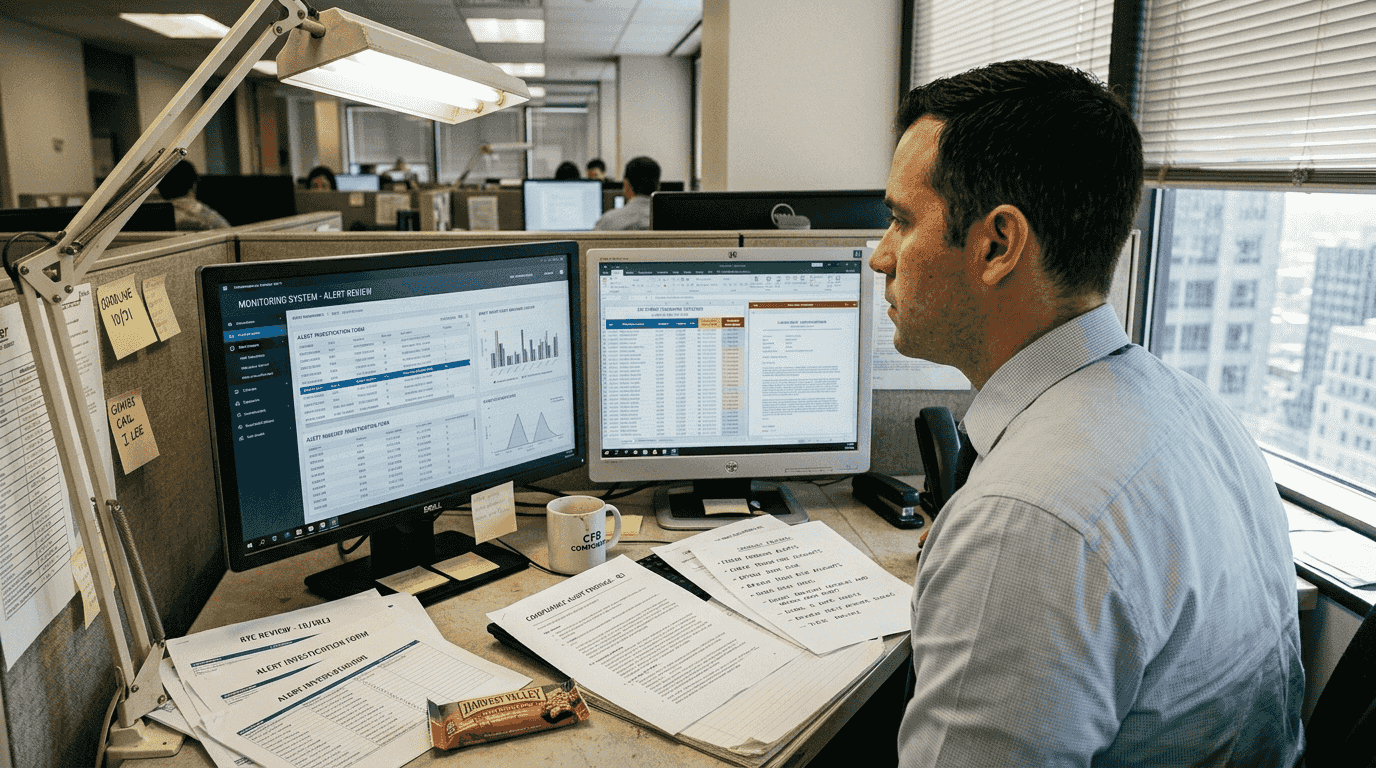

The shift from manual to automated compliance changes job roles fundamentally. Instead of spending days compiling reports or reviewing routine transactions, compliance officers now analyze AI-generated insights, validate findings, and make strategic decisions. This evolution aligns with broader risk management best practices that emphasize proactive identification and mitigation of threats. As organizations adopt advanced AI tools in internal auditing, compliance teams gain powerful allies in maintaining regulatory adherence while supporting business objectives.

Pro Tip: Start with high-volume, rules-based processes when piloting AI in compliance. These areas deliver quick wins and build organizational confidence in AI capabilities.
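
One way to put this tip into practice is to rank candidate processes by a simple score. The sketch below is illustrative only: the `Process` fields, the scoring heuristic (volume multiplied by the share of decisions that follow fixed rules), and the sample figures are all assumptions made for this example, not an established method.

```python
from dataclasses import dataclass


@dataclass
class Process:
    name: str
    monthly_volume: int       # items handled per month (hypothetical figure)
    rules_based_share: float  # fraction of decisions that follow fixed rules, 0..1


def pilot_score(p: Process) -> float:
    """Illustrative heuristic: volume weighted by how rules-based the work is."""
    return p.monthly_volume * p.rules_based_share


def rank_pilot_candidates(processes: list[Process]) -> list[Process]:
    # Highest score first, so high-volume, rules-based work rises to the top.
    return sorted(processes, key=pilot_score, reverse=True)


# Hypothetical inventory of compliance processes.
candidates = [
    Process("sanctions screening", 120_000, 0.9),
    Process("policy exception review", 300, 0.2),
    Process("transaction monitoring alerts", 45_000, 0.7),
]

ranked = rank_pilot_candidates(candidates)
for p in ranked:
    print(f"{p.name}: {pilot_score(p):,.0f}")
```

A real assessment would weigh more than two factors, such as data quality and regulatory sensitivity, but even a rough ranking like this helps focus the first pilot.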

Benefits and practical applications of AI in compliance

AI delivers measurable advantages that transform compliance operations. Consider Customer Due Diligence (CDD) and transaction monitoring, traditionally labor-intensive processes requiring multiple manual handoffs and reviews. AI-powered systems automate these workflows end to end, eliminating delays and reducing human error. The results are striking.

AI-powered solutions reduce false positives in AML systems by over 90%, enabling analysts to focus on genuine threats rather than chasing irrelevant alerts. One APAC bank saved $1 million annually and cut transaction processing times by 40% using AI-driven compliance automation. These improvements directly impact operational efficiency and regulatory effectiveness.

Top AI use cases and their impact:

| Use Case | Key Benefit | Typical Impact |
| --- | --- | --- |
| Transaction monitoring | Faster processing, fewer false positives | 40% time reduction, 90%+ fewer false positives |
| Sanctions screening | Enhanced accuracy, reduced analyst workload | 91.8% false positive reduction |
| Regulatory reporting | Automated data aggregation and validation | 60% faster report generation |
| Policy updates | Real-time regulatory change tracking | 50% faster policy revision cycles |
| Training personalization | Adaptive learning paths based on role and risk | 35% improvement in knowledge retention |

The efficiency gains extend beyond speed. AI improves accuracy by applying consistent logic across all cases, eliminating the variability inherent in manual reviews. When analysts spend less time on false positives, they can investigate genuine risks more thoroughly, improving overall compliance quality. This balance between strict regulation and operational agility is crucial in competitive markets where delays cost money and damage reputations.

AI also enhances compliance auditing for risk reduction by continuously monitoring controls and flagging deviations in real time. Traditional audits happen periodically, creating windows where issues can develop undetected. AI-driven monitoring provides ongoing assurance, catching problems early when they are easier and cheaper to fix. This proactive approach aligns with practical regulatory compliance examples that emphasize prevention over remediation.

Pro Tip: Measure AI performance against baseline metrics from your pre-AI processes. Quantifying improvements in false positive rates, processing times, and cost savings builds the business case for expanded AI adoption.
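
As a rough illustration of this tip, the improvement over a pre-AI baseline can be expressed as a percentage reduction. The function name and every figure below are hypothetical, chosen only to show the arithmetic. Substitute your own baseline and post-deployment measurements.

```python
def percent_reduction(baseline: float, current: float) -> float:
    """Percentage drop from baseline to current (positive means improvement)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - current) * 100 / baseline


# Hypothetical monthly measurements before and after an AI pilot.
baseline = {"false_positive_alerts": 1000, "minutes_per_transaction": 50}
with_ai = {"false_positive_alerts": 80, "minutes_per_transaction": 30}

improvements = {
    metric: percent_reduction(baseline[metric], with_ai[metric])
    for metric in baseline
}

for metric, pct in improvements.items():
    print(f"{metric}: {pct:.1f}% reduction")
```

Keeping the baseline and post-deployment figures side by side makes it easier to show which gains come from the AI system and which from other process changes.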

Navigating AI governance and regulatory challenges

AI’s benefits come with significant governance responsibilities. Regulatory landscapes are evolving rapidly. In 2025 alone, 157 new AI-related laws were introduced worldwide, adding layers of complexity to compliance functions already stretched thin. The AI governance market is projected to grow at a CAGR of up to 49.2% through 2034, reflecting the urgent need for robust frameworks.

Organizations face real challenges keeping pace. It still takes more than a year on average for organizations to fully implement regulatory changes. This lag creates risk exposure, especially as regulators increasingly scrutinize AI systems for bias, transparency, and accountability. AI governance is no longer optional. It is a critical component of building proactive, resilient compliance systems that can adapt to regulatory shifts without massive disruption.

Key governance considerations include:

- Ethical use: Ensuring AI decisions align with organizational values and legal standards
- Transparency: Documenting how AI models make decisions and providing explainability to regulators and stakeholders
- Bias mitigation: Regularly testing AI systems for discriminatory outcomes and correcting data or algorithm issues
- Continuous monitoring: Tracking AI performance over time to detect drift, errors, or unintended consequences
- Human oversight: Maintaining human judgment in high-stakes decisions and validating AI recommendations
- Data privacy: Protecting sensitive information used to train and operate AI systems
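
To make the continuous monitoring point concrete, here is a minimal sketch of one common drift check, the population stability index (PSI), which compares how model scores were distributed at deployment with how they are distributed today. This is one technique among several, not a prescribed method. The bin proportions are hypothetical, and the thresholds in the comments are a widely used rule of thumb rather than a regulatory standard.

```python
import math


def population_stability_index(expected: list[float], actual: list[float],
                               eps: float = 1e-6) -> float:
    """PSI between two binned distributions given as proportions per bin."""
    if len(expected) != len(actual):
        raise ValueError("both distributions need the same number of bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        psi += (a - e) * math.log(a / e)
    return psi


# Hypothetical share of risk scores falling into four score bands.
at_deployment = [0.25, 0.25, 0.25, 0.25]
this_quarter = [0.10, 0.20, 0.30, 0.40]

psi = population_stability_index(at_deployment, this_quarter)

# Rule of thumb (an assumption, tune for your own program):
# below 0.1 is stable, 0.1 to 0.25 deserves a closer look, above 0.25 suggests drift.
if psi > 0.25:
    status = "significant drift"
elif psi > 0.1:
    status = "moderate shift"
else:
    status = "stable"
print(f"PSI = {psi:.3f} ({status})")
```

A check like this only flags that the score distribution has moved. Deciding whether the shift reflects a real change in risk or a model problem still calls for human review.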

These considerations require cross-functional collaboration between compliance, legal, IT, and business units. Siloed approaches fail because AI impacts multiple domains simultaneously. Effective governance integrates AI risk management into existing compliance frameworks while recognizing AI’s unique characteristics.

“The conversation has shifted from whether AI should be governed to how organizations can govern it responsibly while maximizing its value in compliance operations.”

This shift demands new skills and mindsets. Compliance professionals must understand AI capabilities and limitations, ask the right questions of data scientists, and translate technical concepts into business and regulatory language. Developing an AI usage strategy for internal audit provides a roadmap for addressing these challenges systematically. Similarly, strengthening compliance management in finance ensures AI governance aligns with broader financial controls and risk management practices.

Best practices for integrating AI into your compliance program

Successful AI integration requires strategic planning and disciplined execution. It depends on matching AI use cases to specific business needs and on involving compliance professionals in AI design. Rushing into AI without clear objectives or governance wastes resources and creates new risks.

| Aspect | Traditional Compliance | AI-Driven Compliance |
| --- | --- | --- |
| Monitoring | Periodic manual reviews | Continuous automated surveillance |
| Reporting | Manual data aggregation | Automated report generation with real-time updates |
| Training | Generic, annual programs | Personalized, adaptive learning paths |
| Risk detection | Reactive, based on known patterns | Proactive, identifies emerging patterns |
| Resource allocation | Spread across routine tasks | Focused on high-value analysis and strategy |

Follow these steps to integrate AI effectively:

1. Assess current processes: Identify high-volume, rules-based tasks where AI can deliver quick wins and build momentum.
2. Define clear objectives: Set measurable goals for efficiency, accuracy, cost reduction, or risk mitigation that AI should achieve.
3. Launch pilot programs: Test AI in controlled environments, gather feedback, and refine before scaling across the organization.
4. Establish governance frameworks: Create policies for AI use, oversight, bias testing, and performance monitoring from day one.
5. Train your team: Equip compliance professionals with skills to interpret AI outputs, validate findings, and manage AI systems.
6. Monitor and iterate: Continuously measure AI performance against objectives, adjust models as needed, and stay responsive to regulatory changes.
7. Engage stakeholders: Involve business leaders, IT, legal, and auditors early to ensure alignment and secure necessary resources.

Balancing innovation with risk mitigation is critical. AI offers powerful capabilities, but deploying it without proper controls invites regulatory scrutiny and operational failures. Start small, prove value, and expand deliberately. This approach minimizes disruption while building organizational competence and confidence.

Pro Tip: Engage seasoned compliance professionals to provide continuous feedback and validation to AI systems. Their domain expertise ensures AI recommendations remain relevant, accurate, and aligned with regulatory expectations.

Transforming auditing practices with AI requires understanding both the technology’s potential and its limitations. AI excels at pattern recognition and processing speed but lacks human judgment, contextual understanding, and ethical reasoning. Recognizing the risks of AI transcription in sensitive situations like auditing interviews highlights the importance of balancing innovation with human oversight.

Enhance your compliance expertise with specialized AI training

Staying ahead in AI-driven compliance requires continuous learning and skill development. As AI reshapes job roles and expectations, compliance professionals must deepen their understanding of AI technologies, governance frameworks, and strategic applications. Specialized training bridges the gap between current capabilities and future demands.

Explore targeted CPE courses designed specifically for auditors and compliance professionals navigating AI integration. Access live webinars and in-person events across multiple U.S. cities, led by industry experts with Big 4 backgrounds who bring practical, standards-based instruction. Whether you are building foundational AI knowledge or advancing to complex governance and risk management topics, the right training accelerates your professional growth and organizational impact. Check the 2026 CPE event calendar for upcoming sessions, review internal auditor CPE webinars for flexible learning options, and discover why expert CPE training at CCS is trusted by compliance professionals nationwide.

Frequently asked questions

What are the main risks of using AI in compliance?

AI introduces risks including algorithmic bias, false positives or negatives, lack of transparency in decision-making, and data privacy concerns. Without proper governance, AI systems can perpetuate existing biases in training data, leading to discriminatory outcomes. Models may also drift over time, producing inaccurate results if not monitored continuously. Human oversight remains essential to validate AI recommendations and ensure decisions align with ethical and legal standards.

How can AI reduce false positives in anti-money laundering (AML) compliance?

AI refines screening by analyzing complex patterns and contextual factors beyond static rules, minimizing unnecessary alerts. Machine learning models adapt to emerging threats and reduce noise from irrelevant matches. AI-powered solutions achieved a 91.8% reduction in false positives for sanctions screening, enabling analysts to focus on genuine risks and delivering significant cost savings.

What steps should organizations take to govern AI in compliance effectively?

Establish clear policies on AI use, monitor performance continuously, engage compliance experts in system design, and stay updated on evolving regulations. Regular audits and bias mitigation are essential to maintain trust and effectiveness. The AI governance market is rapidly growing, emphasizing the need for strong frameworks to manage ethical and regulatory risks. Cross-functional collaboration ensures AI governance integrates seamlessly with existing compliance and risk management practices.

How does AI change the role of compliance professionals?

Compliance professionals move from repetitive work to interpreting AI insights, managing governance, and anticipating risks. This evolution enhances strategic value and demands new skills in data analysis, AI literacy, and ethical decision-making. AI is transforming compliance from a manual, rules-based function into a data-driven, responsive discipline. Continuous learning and adaptation are critical as AI capabilities and regulatory expectations evolve.
